Knowledge Base Software for B2B Support: Architecture, API Design, and AI Readiness

Cole D'Ambra

Growth

Most teams don't struggle to choose a knowledge base. They struggle to choose the right one for how they actually operate.

On the surface, the decision looks straightforward. You compare editors, check how the widget looks, skim the search experience, and make sure articles are easy to publish. For smaller teams, that's often enough. The knowledge base is a publishing tool, and the main job is getting useful content in front of customers quickly.

That assumption starts to break down as support becomes more intertwined with the rest of the product.

Articles need to stay in sync with APIs that change every few weeks. Support agents need live customer context alongside documentation. AI assistants need structured content they can reliably retrieve and reason over. And engineering teams want to plug the knowledge layer into workflows, not manage it as a separate system.

At that point, a knowledge base stops being just a content tool. It becomes part of your support infrastructure.

The problem is that most platforms aren't evaluated that way. Vendor pages emphasize editor UX, theming, and publishing speed. Buying processes focus on what's easy to roll out, not what will still hold up as support complexity grows.

This guide is for teams that are already feeling that shift.

If you're deciding between platforms and care about how your knowledge base integrates with APIs, supports AI workflows, and scales with your support operations, the questions you ask need to change. We'll walk through how different platforms are structured, what capabilities actually matter at scale, and how to test them before you commit.

The Architecture Decision Most Miss

The knowledge base category splits into two fundamentally different architectures, and most teams don't realize they've made a choice until it's too expensive to reverse.

Closed knowledge platforms centralize everything behind a proprietary UI. Search works through a web widget. Articles live in a CMS that exposes no content retrieval API. If the platform ships an AI layer, it's a bundled model you can't inspect, swap, or route around. The experience is polished, and the onboarding is fast, which is exactly why teams choose them at 50 employees.

API-first platforms treat articles, categories, metadata, and search as queryable endpoints. You can GET an article by slug, POST a new article programmatically, run a search query and receive structured JSON with relevance scores. The knowledge layer is composable: you can put it inside a RAG pipeline, pull it into an agent's context window, sync it with your CRM, or expose it through a custom portal built entirely outside the vendor's UI.

The compounding cost of the wrong choice becomes visible around 200-300 employees. A team that chose a closed platform at 50 employees for its editor experience starts running into specific constraints. They can't feed article content into a custom AI agent because there's no content retrieval API. They can't sync article updates to their internal runbook system because there's no webhook on content events. They can't build a deflection flow that surfaces relevant articles in their in-app widget because search only returns rendered HTML. Each of these is individually solvable with workarounds. Together, they make the knowledge base a bottleneck.

Three questions settle the architectural question before you go deeper into any evaluation:

Can you make a GET request to a content retrieval endpoint and receive structured JSON with article body, metadata, and last-updated timestamp?

Can you POST a new article via API without any UI interaction?

Does the search API return relevance scores and article metadata, or does it return rendered HTML?

If the answer to any of these is no (or "contact sales to discuss API access"), the platform's architectural constraints are load-bearing, and you'll spend the next few years working around them.
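A minimal sketch of how the first and third questions could be checked against a decoded API response. The field names (`body`, `metadata`, `updated_at`) are assumptions for illustration, not any vendor's actual schema; substitute whatever the platform's API reference documents.

```python
from typing import Any

# Hypothetical field names; replace with the ones your vendor documents.
REQUIRED_ARTICLE_FIELDS = ("body", "metadata", "updated_at")


def missing_article_fields(payload: dict[str, Any]) -> list[str]:
    """Return the required fields that are absent or empty in an article payload."""
    return [name for name in REQUIRED_ARTICLE_FIELDS if not payload.get(name)]


def looks_like_rendered_html(body: str) -> bool:
    """Crude heuristic: a body that starts with a tag is probably rendered HTML."""
    return body.lstrip().startswith("<")
```

An empty list from `missing_article_fields` plus a `False` from `looks_like_rendered_html` is a reasonable signal that the read path returns structured content rather than a rendered page.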

Structuring Knowledge for Human Agents and AI Agents

Human agents and AI agents read knowledge bases in entirely different ways. Human agents browse, follow related article links, and hold context across a conversation. AI agents retrieve chunks: they embed a query, find semantically similar passages, and inject those passages into a context window. Article length, heading hierarchy, and internal linking all affect retrieval precision in a RAG context, and the structure that works for human navigation often performs poorly in semantic search.

For starters, the content schema underneath each article matters more than most teams realize. Fields that directly affect AI retrieval quality include:

slug (used for deduplication)

tags (categorical signal for retrieval filtering)

last-updated timestamp (staleness detection)

product area label (scope filtering for multi-product KB queries)

content type enum (distinguishing troubleshooting articles from conceptual explainers from API reference)

Fields that only matter for human navigation (breadcrumb path, "related articles" sidebar) carry no weight in vector retrieval and shouldn't be deliberated over when designing the schema.
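The retrieval-relevant fields listed above could be modeled roughly as follows. This is a sketch with assumed names and enum values, not a schema any particular platform exposes; the deduplication helper shows one way the slug might be used.

```python
from dataclasses import dataclass, field
from datetime import datetime
from enum import Enum


class ContentType(Enum):
    TROUBLESHOOTING = "troubleshooting"
    CONCEPTUAL = "conceptual"
    API_REFERENCE = "api_reference"


@dataclass
class Article:
    slug: str                 # stable identifier, used for deduplication
    body: str
    last_updated: datetime    # drives staleness detection
    product_area: str         # scope filter for multi-product queries
    content_type: ContentType
    tags: list[str] = field(default_factory=list)  # categorical retrieval filter


def dedupe_by_slug(articles: list[Article]) -> list[Article]:
    """Keep only the most recently updated article for each slug."""
    latest: dict[str, Article] = {}
    for article in articles:
        current = latest.get(article.slug)
        if current is None or article.last_updated > current.last_updated:
            latest[article.slug] = article
    return list(latest.values())
```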

Common Failure Cases

A concrete failure case is the "complete guide" article. A 4,000-word article covering account setup, billing questions, API authentication, and webhook configuration will reliably surface for all four query types in keyword search because it contains all four keyword clusters. In semantic retrieval, it performs inconsistently because the embeddings encode a broad semantic footprint.

When an AI agent retrieves the top-3 results for "how do I authenticate API requests," a 4,000-word mixed-topic article competes with four focused articles that each cover one topic in 500-700 words. The focused articles win on retrieval precision because their embeddings tightly represent a single concept.
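A toy calculation makes the dilution effect concrete. It assumes an idealized four-dimensional "topic space" in which each topic is its own axis; real embedding models do not separate topics this cleanly, so treat the numbers as an illustration of the mechanism rather than a prediction.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy axes: account setup, billing, API authentication, webhooks.
query_auth = [0.0, 0.0, 1.0, 0.0]
focused_auth_article = [0.0, 0.0, 1.0, 0.0]
complete_guide = [0.25, 0.25, 0.25, 0.25]  # equal mix of all four topics

focused_score = cosine(query_auth, focused_auth_article)  # 1.0
guide_score = cosine(query_auth, complete_guide)          # 0.5
```

In this idealized setup the mixed-topic guide scores half as high as the focused article for the authentication query, which is why it tends to lose a top-3 slot to single-topic articles.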

Staleness is another common failure case, one that produces the easiest-to-miss errors. A retrieval-augmented generation (RAG) pipeline that retrieves an outdated article doesn't return a blank answer. It returns a confident, specific, wrong answer. An article describing an OAuth flow that your product deprecated eight months ago will inject that deprecated flow into every AI-generated response for queries about authentication.

An engineering solution to this issue is a content freshness policy: automated staleness flags on articles that haven't been updated in a configurable window (90 days is a reasonable default for most B2B SaaS products), ticket-correlation triggers that route articles to a review queue when open tickets on the same topic spike, and a review process that gates article publication on explicit last-verified confirmation.

It is hard to get a knowledge base platform to flag "complete guide" articles automatically, but it is entirely feasible to set up a system that flags stale content so you can review it or exclude it from human and bot access.
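A staleness flag of this kind can be very small. The sketch below assumes you already have each article's slug and last-updated timestamp from the content API; the function names are illustrative, and the 90-day default mirrors the window suggested above.

```python
from datetime import datetime, timedelta, timezone


def is_stale(last_updated: datetime, now: datetime, max_age_days: int = 90) -> bool:
    """True when the article has gone longer than max_age_days without an update."""
    return now - last_updated > timedelta(days=max_age_days)


def stale_slugs(articles: dict[str, datetime], now: datetime, max_age_days: int = 90) -> list[str]:
    """Return slugs outside the freshness window, oldest first."""
    stale = [(ts, slug) for slug, ts in articles.items() if is_stale(ts, now, max_age_days)]
    return [slug for _, slug in sorted(stale)]
```

The resulting list could feed a review queue, or act as an exclusion filter on whatever index your AI agent retrieves from.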

AI-Powered Search: What the Marketing Means vs. What to Actually Test

Every knowledge base vendor now ships "AI-powered search." The term covers a wide range of implementations, and the differences matter for retrieval quality.

Semantic vs Keyword Search

Most platforms offer two kinds of retrieval: semantic and keyword-based. Semantic search embeds the query using a model like OpenAI's text-embedding-3-large, then finds articles whose embeddings are closest in vector space. It handles conceptual queries well: "how do I migrate my data" matches articles about data export and migration workflows, even if those articles don't contain the exact phrase.

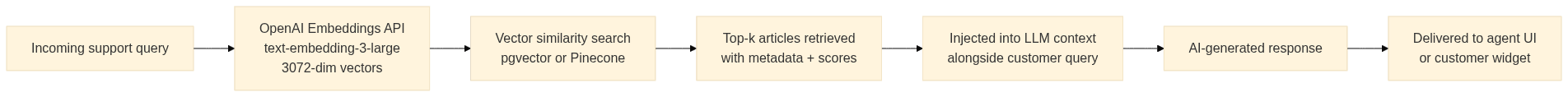

Here's what the flow looks like on a high level:

The overall quality of the pipeline's output depends on article chunking and metadata upstream. Garbage schema in, confident wrong answers out.

On the other hand, keyword search indexes exact terms and handles technical queries precisely: an HTTP 429 error code, a specific CLI flag, an exact field name. Most B2B support knowledge bases need both retrieval modes because the query distribution spans both types. So, when evaluating a platform, you should test whether it supports hybrid retrieval or forces you into one mode.
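If a platform exposes keyword and semantic results separately, one common way to combine them yourself is reciprocal rank fusion (RRF). This is a general technique, not something the platforms discussed here are known to use; the sketch assumes each search mode returns an ordered list of article IDs, and `k = 60` is a conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of article IDs into one ranking.

    Each appearance contributes 1 / (k + rank), so articles ranked well by
    several retrieval modes rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, article_id in enumerate(ranking, start=1):
            scores[article_id] = scores.get(article_id, 0.0) + 1.0 / (k + rank)
    # Sort by fused score, breaking ties by ID so the output is deterministic.
    return sorted(scores, key=lambda article_id: (-scores[article_id], article_id))
```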

A quick evaluation method: pull 20 real support queries from your ticket history (use your actual unresolved ticket body text, not cleaned-up paraphrases). Run each query against the vendor's search API or search widget, then score top-3 precision: does the right article appear in the first three results? This test takes about 30 minutes and eliminates a large fraction of any shortlist. Platforms that perform well on curated demo content regularly fail on the messy, abbreviated language your actual customers use.
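Scoring that test can be scripted in a few lines. The sketch assumes you have recorded, for each query, the ordered list of article slugs the vendor returned and the slug you consider correct; both inputs and the function name are hypothetical.

```python
def top_k_hit_rate(results: dict[str, list[str]], expected: dict[str, str], k: int = 3) -> float:
    """Fraction of queries whose expected article appears in the first k results."""
    if not expected:
        return 0.0
    hits = sum(
        1 for query, slug in expected.items()
        if slug in results.get(query, [])[:k]
    )
    return hits / len(expected)
```

Running the same query set against each shortlisted vendor gives you one comparable number per platform.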

AI Model Lock-in

Another thing to check when evaluating AI search is whether the platform lets you choose your own AI model. Most platforms bundle a proprietary LLM for knowledge base Q&A generation. You can't swap the model, can't inspect the retrieval logic, and can't route queries through a model fine-tuned on your specific product domain. When your support workflow depends on accurate technical answers about a complex API, that constraint is significant.

Plain's BYOA (Bring Your Own Agent) capability decouples the knowledge retrieval layer from the inference layer entirely. Your knowledge articles remain accessible via the content API, and you route queries through any agent you choose: a fine-tuned model, a Claude instance with product-specific context, or a custom retrieval pipeline built on pgvector or Pinecone.

The platform holds the knowledge layer while you own the inference. Mintlify runs third-party AI agents directly on Plain's knowledge infrastructure, which is exactly how this architecture pattern looks in production.

Integration Architecture: Wiring Your Knowledge Base Into the Support Stack

Your support stack's knowledge layer becomes actual infrastructure when it fires events, accepts programmatic writes, and exposes search as a queryable API endpoint. That capability determines what you can build on top of it.

Webhooks form the foundation of a live knowledge pipeline. The two most valuable events are article publish and article update. When either fires, you can trigger downstream processes: notify a Slack channel for human review, update a timestamp field in your CRM's knowledge index, or sync the article content to a secondary vector store.
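A sketch of the receiving side, assuming the platform delivers a JSON payload with a `type` and a `slug`. The event names and payload shape are assumptions for illustration; the handlers return strings in place of real Slack or vector-store calls.

```python
from typing import Callable

Handler = Callable[[dict], str]


def notify_reviewers(event: dict) -> str:
    # Stand-in for posting to a Slack channel.
    return f"notify: {event['slug']} needs review"


def resync_vector_store(event: dict) -> str:
    # Stand-in for re-embedding the article in a secondary vector store.
    return f"resync: {event['slug']}"


# Event names are assumptions; check your platform's webhook reference.
HANDLERS: dict[str, list[Handler]] = {
    "article.published": [notify_reviewers, resync_vector_store],
    "article.updated": [resync_vector_store],
}


def handle_event(event: dict) -> list[str]:
    """Run every handler registered for the event type; unknown types are ignored."""
    return [handler(event) for handler in HANDLERS.get(event.get("type", ""), [])]
```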

The more sophisticated patterns run in reverse: when your ticketing system detects a spike in unresolved tickets on a topic that maps to an existing KB article, it could trigger an article review workflow. When an agent resolves a novel ticket type with no corresponding KB article, a draft generation workflow could kick off automatically.

Common Integration Patterns

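One integration pattern keeps your knowledge base updated against incoming support cases: watch ticket volume per topic, send the matching article to a review queue when volume spikes, and start a draft when a resolved ticket has no matching article.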
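The routing decision at the heart of that automation might be sketched like this. The spike rule (current volume at least twice the baseline) and the action labels are assumptions chosen for illustration, not a recommended threshold.

```python
from typing import Optional


def next_action(topic_ticket_count: int, baseline_count: int,
                matching_article: Optional[str], spike_factor: float = 2.0) -> str:
    """Decide which knowledge workflow, if any, a ticket topic should trigger.

    - No matching article: start a draft from the resolved ticket.
    - Matching article and volume well above baseline: queue it for review.
    """
    if matching_article is None:
        return "generate_draft"
    if topic_ticket_count >= spike_factor * max(baseline_count, 1):
        return f"review:{matching_article}"
    return "no_action"
```

In practice the two counts would come from your ticketing system and the article match from a search API call with the ticket body as the query.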

Another common integration pattern is knowledge injection into live conversations. When a ticket arrives, your support tooling could pass the ticket body as a search query to the knowledge base API, retrieve the top-3 articles by relevance score, and surface those articles in the agent's workspace. For AI-assisted support, the same retrieved content could be injected directly into the AI agent's context window before it generates a response. The latency budget for this pattern should be tight though: anything above 200ms for the search API call creates noticeable lag in the agent UI.
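A minimal sketch of the injection step with the latency budget made explicit. The `search` callable stands in for the knowledge base's search API, and the result shape (`title`, `body`, `score`) is an assumption.

```python
import time
from typing import Callable

SearchFn = Callable[[str], list[dict]]  # each result: {"title", "body", "score"}


def build_context(ticket_body: str, search: SearchFn, top_k: int = 3,
                  budget_ms: float = 200.0) -> tuple[str, bool]:
    """Return (context_text, within_budget) for a ticket.

    within_budget is False when the search call took longer than budget_ms.
    """
    start = time.perf_counter()
    results = search(ticket_body)
    elapsed_ms = (time.perf_counter() - start) * 1000.0

    top = sorted(results, key=lambda r: r["score"], reverse=True)[:top_k]
    context = "\n\n".join(f"## {r['title']}\n{r['body']}" for r in top)
    return context, elapsed_ms <= budget_ms
```

The returned text can be shown in the agent's workspace or prepended to an AI agent's prompt; the boolean gives you something to log and alert on when search gets slow.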

By the way, Plain already ships a built-in feature, Customer Cards, that surfaces customer context to agents in chat.

Integrating Knowledge Base Updates in Your Dev Workflows

Plain's MCP server can change the authoring model for engineering-heavy teams by exposing support infrastructure as callable tools inside AI-powered IDEs like Cursor and Claude's desktop app. A support engineer working in Cursor can query the knowledge base for existing articles on a topic, identify coverage gaps, and push a new article draft directly from their IDE without switching to a browser.

For teams where engineers contribute frequently to knowledge content (API documentation, technical troubleshooting guides, SDK reference), this eliminates the context-switching cost that makes KB authoring feel like a separate job from engineering work. When the people who know the content best can update it in the tools they already use all day, you get the same benefit infrastructure-as-code delivers for configuration management.

Scaling the Content Pipeline: From 50 Articles to 5,000

The content volume problem is a systems problem. You can't manually curate 5,000 articles with a two-person support team. The teams that scale their knowledge bases successfully treat content creation as a pipeline with automated triggers, human review gates, and data-driven prioritization.

Most automated article generation workflows follow roughly the same pattern:

Cluster your resolved tickets by topic using the same embedding model you use for KB search (consistency in embedding models matters because the semantic space needs to be shared).

Flag topic clusters where the cluster size exceeds a threshold (say, five tickets in a 30-day window) and no KB article covers the topic.

Feed those flagged clusters into a draft generation workflow, where a prompt that includes the ticket content and resolution notes produces a draft article.

Finally, route the draft to a human review queue.

The automation handles content discovery and first-draft generation while your team owns accuracy and quality judgment.
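The flagging and prompt-assembly steps of the pipeline above could look roughly like this. The sketch assumes clustering has already happened, so each resolved ticket arrives with a topic label and a resolution time, and it reads the threshold as "at least five tickets"; all names are hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta


def flag_uncovered_clusters(tickets: list[tuple[str, datetime]], covered_topics: set[str],
                            now: datetime, min_tickets: int = 5,
                            window_days: int = 30) -> list[str]:
    """Topics with at least min_tickets resolved tickets in the window and no KB article."""
    cutoff = now - timedelta(days=window_days)
    counts = Counter(topic for topic, resolved_at in tickets if resolved_at >= cutoff)
    return sorted(
        topic for topic, n in counts.items()
        if n >= min_tickets and topic not in covered_topics
    )


def draft_prompt(topic: str, ticket_summaries: list[str]) -> str:
    """Assemble the prompt handed to whatever model drafts the article."""
    joined = "\n".join(f"- {summary}" for summary in ticket_summaries)
    return (
        f"Draft a support article about '{topic}'.\n"
        f"Base it only on these resolved tickets and their resolution notes:\n{joined}\n"
        "Mark anything uncertain for human review."
    )
```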

Controlling Access at Scale

Role-based access control (RBAC) for technical B2B knowledge is non-negotiable above a certain customer count.

Internal runbooks (API incident procedures, escalation playbooks) should never be customer-accessible. Enterprise-tier onboarding guides should be visible to enterprise accounts but gated from self-serve users. API reference material might need to be scoped by an authentication token so that paying customers see their plan's full API surface while trial users see a subset.

The architectural requirement is that access control is enforced at the API layer, not the UI layer alone. A KB platform that applies RBAC only through its widget but returns full article content to any authenticated API call has a leaky access control model that creates exposure as your customer base grows.
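Enforcing access at the API layer means the filter runs on the server before anything is serialized. A minimal sketch, assuming each article carries an audience label and each token resolves to a set of audiences it may read (both are illustrative conventions, not a specific platform's model):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedArticle:
    slug: str
    audience: str  # "public", "enterprise", or "internal" (illustrative tiers)


def visible_articles(articles: list[ScopedArticle], token_audiences: set[str]) -> list[ScopedArticle]:
    """Filter before serialization so the widget, API, and AI agents get the same rules."""
    return [article for article in articles if article.audience in token_audiences]
```

Because the widget would call the same function as any other client, there is no path that returns internal content to a token scoped for public reads.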

Content Coverage Analysis at Scale

Content coverage analysis at this scale can't rest on editorial judgment alone. It needs quantitative signals.

A good place to start is to calculate the deflection rate per article by measuring the fraction of users who view an article and then do not open a contact form. High-traffic articles with deflection rates below 40% signal content that customers read but find insufficient (the article exists, but doesn't resolve the question). Articles with zero views in the past 90 days, despite ongoing ticket volume on the same topic, signal content that customers can't find through search. The two gaps require different responses: the first needs content revision, and the second needs metadata and slug improvements to make the article more discoverable.
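Both signals reduce to simple arithmetic once the counts are available. In the sketch below, the 40% floor and the 90-day window come from the paragraph above, while the `high_traffic` cutoff of 500 views is an assumed placeholder you would set from your own traffic distribution.

```python
def deflection_rate(views: int, contacts_after_view: int) -> float:
    """Share of article views that were not followed by a contact form submission."""
    if views == 0:
        return 0.0
    return (views - contacts_after_view) / views


def diagnose(views_90d: int, contacts_after_view: int, topic_tickets_90d: int,
             high_traffic: int = 500, min_deflection: float = 0.40) -> str:
    """Classify an article by the kind of follow-up it needs."""
    if views_90d == 0 and topic_tickets_90d > 0:
        return "improve_discoverability"  # customers are not finding the article
    if views_90d >= high_traffic and deflection_rate(views_90d, contacts_after_view) < min_deflection:
        return "revise_content"  # customers read it but still contact support
    return "ok"
```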

CI systems like GitHub Actions provide a practical automation layer for teams that treat knowledge management as code. A workflow triggered on a schedule can query the KB's article index, cross-reference it against the last 30 days of ticket topic clusters, and output a coverage report to a Slack channel or a GitHub issue. Mintlify's documentation-as-code model shows what this looks like for developer documentation: content lives in Git, changes go through pull request review, and publication is a CI step. For customer-facing support knowledge, the same model works cleanly when the knowledge base exposes a write API.

Platform Selection Framework for Technical Teams

The evaluation matrix that actually matters for engineering-led teams runs five criteria, in priority order.

1. API Coverage

Can you read, write, and search articles programmatically? Does the platform return structured JSON from search with relevance scores and full article metadata? This is the foundation. Without it, every other capability in this list is blocked.

2. AI Flexibility

Does the platform lock you into a proprietary LLM, or can you route queries through your own agent? BYOA-capable platforms let you build a RAG pipeline on top of the knowledge layer using your own embedding model, your own vector store, and your own inference endpoint.

Platforms with bundled-only AI turn their retrieval logic, their model's limitations, and their inference costs into fixed constraints you have to work around.

3. Content Schema Control

Can you add custom metadata fields to article objects? Product area labels, content type enums, audience-tier tags, and custom staleness signals all require schema extensibility. A platform that restricts you to a fixed set of article fields (title, body, category) constrains every downstream retrieval filter you'll want to build.

4. Integration Depth

Does the platform provide native webhooks on content events, or do you need a middleware layer to detect changes? Native webhooks on article publish, article update, and article delete are the minimum. Platforms that require you to poll an API endpoint for changes add latency and operational overhead to every integration.

5. Access Control at the API Layer

Does RBAC apply to API responses or only to the UI widget? Test this directly during your evaluation. Make an API call with a scoped authentication token that should only return public articles, and confirm that internal or tier-restricted articles don't appear in the response.

Build vs Buy?

The build-vs-buy decision follows from where your knowledge requirements sit relative to what API-first managed platforms offer. You should build a custom knowledge infrastructure only when your content is generated directly from code artifacts or live system state (a knowledge base auto-generated from your OpenAPI spec, for example).

You should consider buying a managed platform when your team lacks the bandwidth to maintain a custom search layer and standard KB functionality covers your use case. You should extend an API-first platform when you need standard KB functionality plus custom agent integrations. Most B2B SaaS teams between 50 and 500 employees land in this last group.

Non-negotiables

Four conditions should trigger rejection of any platform during evaluation:

No public content API

AI search that can't be replaced or augmented

No webhook support for content events

Per-seat pricing that penalizes programmatic access

That last one matters because automated workflows that create or update articles at scale should carry different pricing than a human editor seat.

Run This Audit on Your Current Knowledge Base Today

As an actionable takeaway from this article, run an audit on your current knowledge base. This audit should take under an hour, and it should tell you whether your current platform can support the architecture in this guide, or whether migration belongs on the roadmap.

The first test is content retrieval. Make a GET request to your KB's content API (check the docs for the articles endpoint). Confirm the response includes the article body as plain text or markdown, metadata fields, and a last-updated timestamp. If the response is rendered HTML or if no content API exists, that's a migration trigger.

The second test is programmatic article creation. POST a test article via API. If success requires any UI interaction, the write API is incomplete for automation purposes.

The third test is search API quality. Pull 10 real support queries from your ticket history and run each against the search API. Inspect the raw JSON response: check whether relevance scores are included, whether the right article appears in the top 3, and whether the response contains structured metadata or only article IDs.

The fourth test is custom metadata fields. Try adding a product area label and a content type enum to an article object via API. If the schema is fixed beyond title, body, and category, you'll work around that limitation every time you build a retrieval filter.

The fifth test is webhook events. Publish and then update a test article. Confirm that webhook events fire on both actions and that the payload includes article ID, slug, and last-updated timestamp. Then delete the article (or archive it, if that is how the platform retires content) and confirm that a delete event fires.

The sixth test is API-layer access control. Create two authentication tokens with different scope claims. With the restricted token, run a search API call that should exclude internal articles. If the restricted token's response includes content that it shouldn't reach, your access control model applies only at the widget layer.

A platform that passes all six tests has the surface area to support the architecture described throughout this guide. If yours fails on two or more, the platform is constraining capabilities that compound over time. The platform selection framework above gives you the criteria to evaluate and choose the right replacement.

If real stories matter to you, n8n scaled to 20x ticket volume with a team that only doubled by building on support infrastructure that could keep up with that growth. The knowledge layer was part of that infrastructure.

Choosing your support platform deserves the same engineering rigor you'd apply to any other API-dependent system in your stack.

See how Plain's API-first knowledge base fits into your support stack, and bring your own AI agent to it. Book a demo today.